NEWS

- The shared task has ended. Please refer to the deadlines for the system paper submission.

- The final results submission deadline has been extended to March 15, 2022, noon UTC.

- The "unseen test set" has now been released.

- Please note: the final metric used for evaluation is "macro-F1", NOT "weighted-F1".

- A new dataset for the "US Politics" domain is now released!

- We will soon be releasing the training/validation set for the "US Politics" domain. Stay tuned!

- The training/validation dataset for the "Covid-19" domain is now released.

ABOUT THE SHARED TASK

For workshop details, please visit: http://lcs2.iiitd.edu.in/CONSTRAINT-2022/

- Task: Hero, Villain and Victim: Dissecting harmful memes for Semantic role labelling of entities

Given a meme and an entity, determine the role of the entity in the meme: hero vs. villain vs. victim vs. other. The meme is to be analyzed from the perspective of the author of the meme.

- Role labeling for memes: This task emphasizes detecting which entities are glorified, vilified, or victimized within a meme. Taking the meme author's perspective as the frame of reference, the objective is to classify, for a given pair of a meme and an entity, whether the entity is referenced as Hero, Villain, Victim, or Other within that meme.

We have publicly released datasets spanning two domains: Covid-19 and US Politics.

- Definition of the entity classes:

- Hero: The entity is presented in a positive light and glorified for its actions, as conveyed via the meme or gathered from background context.

- Villain: The entity is portrayed negatively, e.g., in an association with adverse traits like wickedness, cruelty, hypocrisy, etc.

- Victim: The entity is portrayed as suffering the negative impact of someone else's actions, whether shown explicitly or conveyed implicitly within the meme.

- Other: The entity is not a hero, a villain, or a victim.

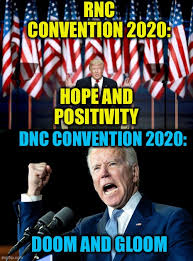

Example 1

Corresponding JSON Line input:


{
  "image": "memes_1486.png",
  "OCR": "AAE RNC\nCONVENTION 2020:\nHOPE AND\nPOSITIVITY\nDNC CONVENTION 2020:\nDOOM AND GLOOM\n",
  "hero": ["Donald Trump"],
  "villain": ["Joe Biden"],
  "victim": [],
  "other": ["Democratic National Convention (DNC)", "Republican National Convention (RNC)"]
}

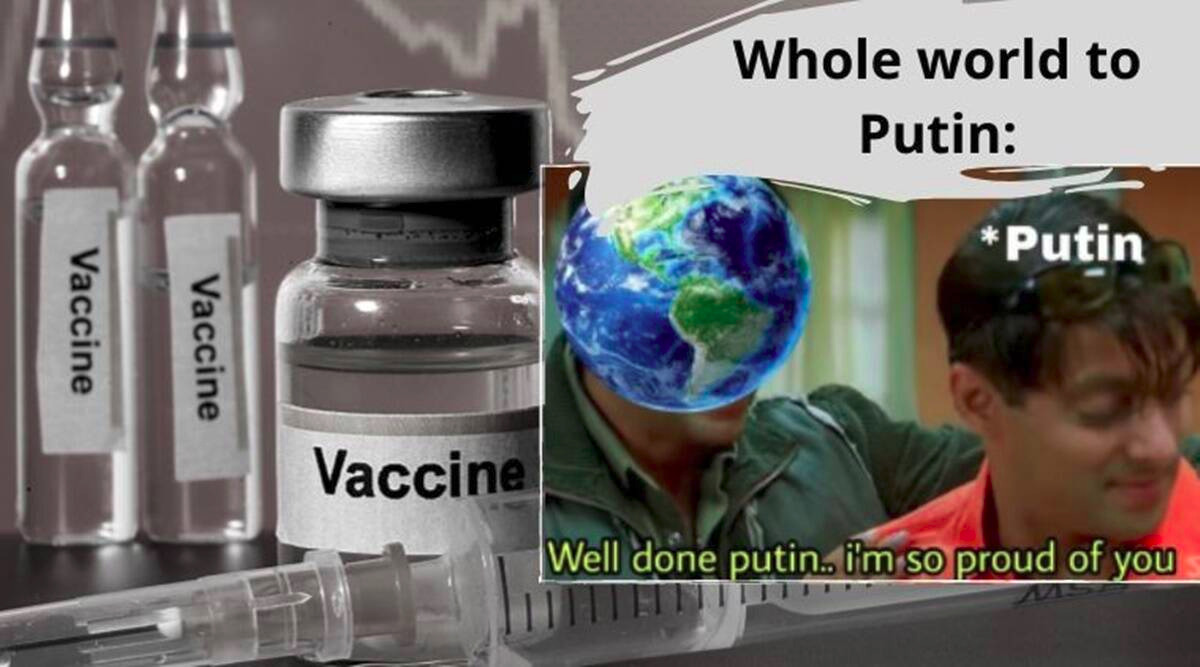

Example 2

Corresponding JSON Line input:


{
  "image": "image_2.png",
  "OCR": "Whole world to\nPutin:\n*Putin\nVaccine\nWell done putin. i'm so proud of you\nVaccine\nVaccine\n",
  "hero": ["Vladimir Putin"],
  "villain": [],
  "victim": [],
  "other": ["the world", "Salman Khan", "vaccine"]
}
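Because the task is defined over pairs of a meme and an entity, each annotated record can be expanded into one labeled example per entity. The following is a minimal sketch of that expansion, not the organizers' code; the helper name `record_to_examples` is hypothetical, and the record is copied from Example 2.

```python
import json

# The four role labels used as keys in each annotated record.
ROLES = ("hero", "villain", "victim", "other")


def record_to_examples(record):
    """Expand one annotated record into (image, entity, role) triples."""
    return [
        (record["image"], entity, role)
        for role in ROLES
        for entity in record.get(role, [])
    ]


# One JSON line of annotated data, copied from Example 2.
line = json.dumps({
    "image": "image_2.png",
    "OCR": "Whole world to\nPutin:\n*Putin\nVaccine\nWell done putin. "
           "i'm so proud of you\nVaccine\nVaccine\n",
    "hero": ["Vladimir Putin"],
    "villain": [],
    "victim": [],
    "other": ["the world", "Salman Khan", "vaccine"],
})

examples = record_to_examples(json.loads(line))
for image, entity, role in examples:
    print(image, entity, role, sep="\t")
```

Each triple can then be fed to a classifier together with the meme image and its OCR text.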

- Evaluation Metric:

The official evaluation measure for the shared task is the macro F1 score for the multi-class classification.

Please note that submissions will be evaluated on an unseen test set that combines data from both the "Covid-19" and "US Politics" domains.
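To illustrate why the choice of macro F1 over weighted F1 matters, here is a small pure-Python sketch of both averages. It is an illustration only, not the official scoring script: it assumes all four roles are always averaged and that a class with no gold and no predicted instances scores 0. The toy labels are made up.

```python
from collections import Counter

ROLES = ("hero", "villain", "victim", "other")


def class_f1(gold, pred, label):
    """F1 for a single class; returns 0.0 when the class never occurs."""
    tp = sum(1 for g, p in zip(gold, pred) if g == label and p == label)
    fp = sum(1 for g, p in zip(gold, pred) if g != label and p == label)
    fn = sum(1 for g, p in zip(gold, pred) if g == label and p != label)
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


def macro_f1(gold, pred, labels=ROLES):
    """Unweighted mean of per-class F1: every class counts equally."""
    return sum(class_f1(gold, pred, label) for label in labels) / len(labels)


def weighted_f1(gold, pred, labels=ROLES):
    """Mean of per-class F1 weighted by each class's gold support."""
    support = Counter(gold)
    return sum(class_f1(gold, pred, l) * support[l] for l in labels) / len(gold)


# Toy, made-up labels: a system that predicts "other" for every entity.
gold = ["other"] * 7 + ["hero", "villain", "victim"]
pred = ["other"] * 10

print(round(macro_f1(gold, pred), 2))     # 0.21
print(round(weighted_f1(gold, pred), 2))  # 0.58
```

Under macro averaging, a system that ignores the rarer hero, villain, and victim classes is penalized heavily, whereas weighted averaging would largely hide the problem.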

- Contest and Dataset:

https://codalab.lisn.upsaclay.fr/competitions/906

- Unseen test set: The "unseen test set" file and the corresponding image set will have samples from both the Covid-19 and the US Politics domains.

{
  "image": "memes_1486.png",
  "OCR": "AAE RNC\nCONVENTION 2020:\nHOPEAND\nPOSITIVITY\nDNC CONVENTION 2020:\nDOOM AND GLOOM\n",
  "entity_list": ["Donald Trump", "Joe Biden", "Democratic National Convention (DNC)", "Republican National Convention (RNC)"]
}

{
  "image": "image_2.png",
  "OCR": "Whole world to\nPutin:\n*Putin\nVaccine\nWell done putin. I'm so proud of you\nVaccine\nVaccine\n",
  "entity_list": ["Vladimir Putin", "the world", "Salman Khan", "vaccine"]
}
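A test file in this format can be read line by line and expanded into one item per entity to classify. The sketch below assumes one JSON object per line with the keys shown in the samples (`image`, `OCR`, `entity_list`); the helper name `iter_test_pairs` is hypothetical, and an in-memory buffer stands in for the real file.

```python
import io
import json


def iter_test_pairs(lines):
    """Yield (image, ocr_text, entity) for every entity in every record."""
    for line in lines:
        line = line.strip()
        if not line:
            continue  # tolerate blank lines between records
        record = json.loads(line)
        for entity in record["entity_list"]:
            yield record["image"], record["OCR"], entity


# In-memory stand-in for the test file, built from the two samples.
sample_file = io.StringIO(
    json.dumps({
        "image": "memes_1486.png",
        "OCR": "AAE RNC\nCONVENTION 2020:\nHOPEAND\nPOSITIVITY\n"
               "DNC CONVENTION 2020:\nDOOM AND GLOOM\n",
        "entity_list": ["Donald Trump", "Joe Biden",
                        "Democratic National Convention (DNC)",
                        "Republican National Convention (RNC)"],
    }) + "\n" +
    json.dumps({
        "image": "image_2.png",
        "OCR": "Whole world to\nPutin:\n*Putin\nVaccine\nWell done putin. "
               "I'm so proud of you\nVaccine\nVaccine\n",
        "entity_list": ["Vladimir Putin", "the world", "Salman Khan", "vaccine"],
    }) + "\n"
)

pairs = list(iter_test_pairs(sample_file))
for image, _ocr, entity in pairs:
    print(image, entity, sep="\t")
```

With a real file, the same generator can be driven by `open(path, encoding="utf-8")` in place of the `io.StringIO` buffer.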

- Submission:

Instructions for prediction file submission at Codalab shared-task server:

- The submission NEEDS to be a .zip, with a .jsonl predicted results file as its ONLY content.

- The filename can be anything, but it should follow the pattern "samplefilename.jsonl", i.e., carry the .jsonl extension.

- Please DO NOT include anything other than your single .jsonl prediction result file.

- Please ensure that ALL the test samples (meme images) AND the corresponding entities listed in the unseen test set are considered for prediction and appear in your submission file. Please do NOT leave any entity unpredicted.
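The coverage requirement in the last point can be checked locally before uploading. The following is a rough sketch of such a check, not an official validator; the function name `coverage_problems` is hypothetical, and it assumes the test records use an `entity_list` key while the prediction records use the `hero`, `villain`, `victim`, and `other` keys.

```python
ROLES = ("hero", "villain", "victim", "other")


def coverage_problems(test_records, submission_records):
    """Return (missing, unexpected) sets of (image, entity) pairs."""
    expected = {
        (rec["image"], entity)
        for rec in test_records
        for entity in rec["entity_list"]
    }
    predicted = {
        (rec["image"], entity)
        for rec in submission_records
        for role in ROLES
        for entity in rec.get(role, [])
    }
    return expected - predicted, predicted - expected


test_records = [{
    "image": "image_2.png",
    "entity_list": ["Vladimir Putin", "the world", "Salman Khan", "vaccine"],
}]

# A deliberately incomplete prediction: "vaccine" has been left out.
submission_records = [{
    "image": "image_2.png",
    "hero": ["Vladimir Putin"],
    "villain": [],
    "victim": [],
    "other": ["the world", "Salman Khan"],
}]

missing, unexpected = coverage_problems(test_records, submission_records)
print("missing:", sorted(missing))
print("unexpected:", sorted(unexpected))
```

An empty `missing` set means every listed entity received a prediction; a non-empty `unexpected` set points to entities or images that are not in the test file.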

For the samples depicted for the unseen test set, the submission format would look like:

{
  "image": "image_name",
  "hero": [entity_list],
  "villain": [entity_list],
  "victim": [entity_list],
  "other": [entity_list]
}

{
  "image": "memes_1486.png",
  "hero": ["Donald Trump"],
  "villain": ["Joe Biden"],
  "victim": [],
  "other": ["Democratic National Convention (DNC)", "Republican National Convention (RNC)"]
}

{
  "image": "image_2.png",
  "hero": ["Vladimir Putin"],
  "villain": [],
  "victim": [],
  "other": ["the world", "Salman Khan", "vaccine"]
}
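Putting the format and packaging rules together, a submission archive could be produced roughly as follows. This is a sketch under assumptions: the names `build_rows`, `write_submission_zip`, `always_other`, `predictions.jsonl`, and `submission.zip` are all hypothetical, and the trivial predictor merely stands in for a real model.

```python
import json
import tempfile
import zipfile
from pathlib import Path

ROLES = ("hero", "villain", "victim", "other")


def build_rows(test_records, predict_role):
    """Group per-entity predictions into one submission row per image."""
    rows = []
    for rec in test_records:
        row = {"image": rec["image"], **{role: [] for role in ROLES}}
        for entity in rec["entity_list"]:
            role = predict_role(rec, entity)
            if role not in ROLES:
                raise ValueError(f"unknown role: {role!r}")
            row[role].append(entity)
        rows.append(row)
    return rows


def write_submission_zip(rows, zip_path, jsonl_name="predictions.jsonl"):
    """Write the rows as JSON Lines into a zip holding only that one file."""
    payload = "".join(json.dumps(row, ensure_ascii=False) + "\n" for row in rows)
    with zipfile.ZipFile(zip_path, "w", zipfile.ZIP_DEFLATED) as zf:
        zf.writestr(jsonl_name, payload)


def always_other(record, entity):
    """Placeholder predictor (assumption): labels every entity 'other'."""
    return "other"


test_records = [{
    "image": "image_2.png",
    "entity_list": ["Vladimir Putin", "the world", "Salman Khan", "vaccine"],
}]
rows = build_rows(test_records, always_other)

# Write to a temporary directory so the demo leaves no files behind.
with tempfile.TemporaryDirectory() as tmp:
    zip_path = Path(tmp) / "submission.zip"
    write_submission_zip(rows, zip_path)
    with zipfile.ZipFile(zip_path) as zf:
        archive_names = zf.namelist()

print(archive_names)
```

Writing the .jsonl content directly into the archive with `writestr` keeps the file at the top level of the zip and avoids accidentally packaging extra files or directories.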

In case of multiple submissions by a team, we shall consider the best submission prior to the deadline for the final evaluation. No exceptions shall be made.
